|

Changan Chen1,2,

Sagnik Majumder1,

Ziad Al-Halah1,

Ruohan Gao1,2,

Santhosh Kumar Ramakrishnan1,2, Kristen Grauman1,2 |

|

1UT Austin,2Facebook AI Research Accepted at ICLR 2021 |

|

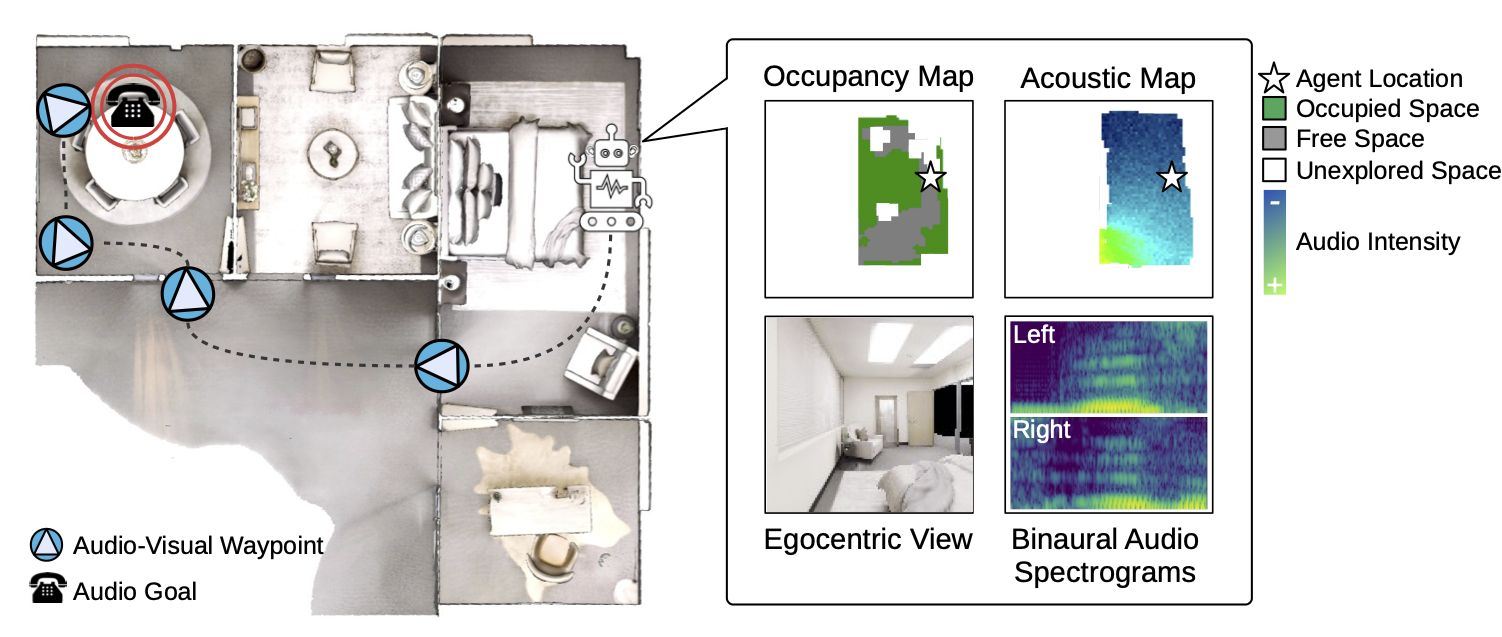

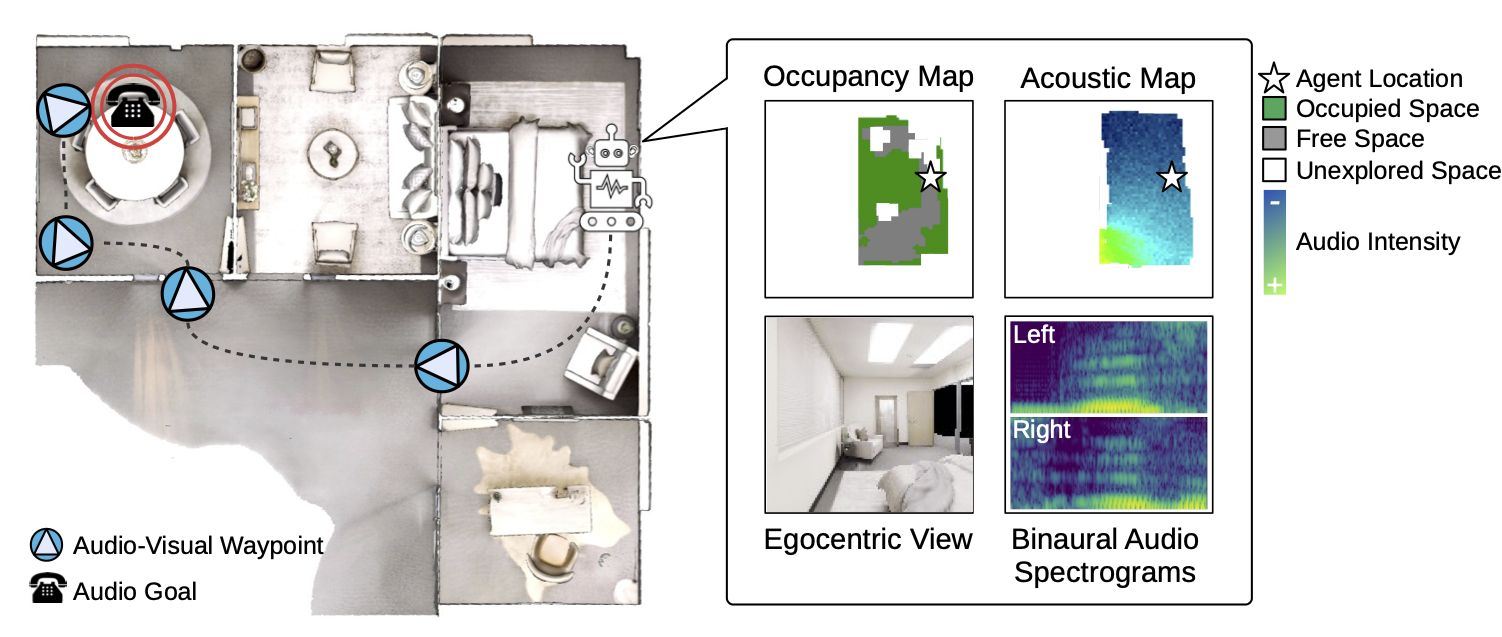

| In audio-visual navigation, an agent intelligently travels through a complex, unmapped 3D environment using both sights and sounds to find a sound source (e.g., a phone ringing in another room). Existing models learn to act at a fixed granularity of agent motion and rely on simple recurrent aggregations of the audio observations. We introduce a reinforcement learning approach to audio-visual navigation with two key novel elements: 1) waypoints that are dynamically set and learned end-to-end within the navigation policy, and 2) an acoustic memory that provides a structured, spatially grounded record of what the agent has heard as it moves. Both new ideas capitalize on the synergy of audio and visual data for revealing the geometry of an unmapped space. We demonstrate our approach on two challenging datasets of real-world 3D scenes, Replica and Matterport3D. Our model improves the state of the art by a substantial margin, and our experiments reveal that learning the links between sights, sounds, and space is essential for audio-visual navigation. |

|

In this video, we walk you over our audio-visual waypoints for navigation project with highlights on our

motivation and contributions. We also show demos of the acoustic simulation as well as the agents'

navigation performance.

|

|

|

| (1) Changan Chen, Sagnik Majumder, Ziad Al-Halah, Ruohan Gao, Santhosh Kumar Ramakrishnan, Kristen Grauman. Learning to Set Waypoints for Audio-Visual Navigation. In ICLR 2021 [Bibtex] |

| (2) Changan Chen*, Unnat Jain*, Carl Schissler, Sebastia Vicenc Amengual Gari, Ziad Al-Halah, Vamsi Krishna Ithapu, Philip Robinson, Kristen Grauman. SoundSpaces: Audio-Visual Navigation in 3D Environments. In ECCV 2020 [Bibtex] |

| UT Austin is supported in part by DARPA Lifelong Learning Machines. |

| Copyright © 2020 University of Texas at Austin |