| Sagnik Majumder1, Ziad Al-Halah1, Kristen Grauman1,2 |

|

1UT Austin,2Facebook AI Research Accepted to ICCV 2021 |

|

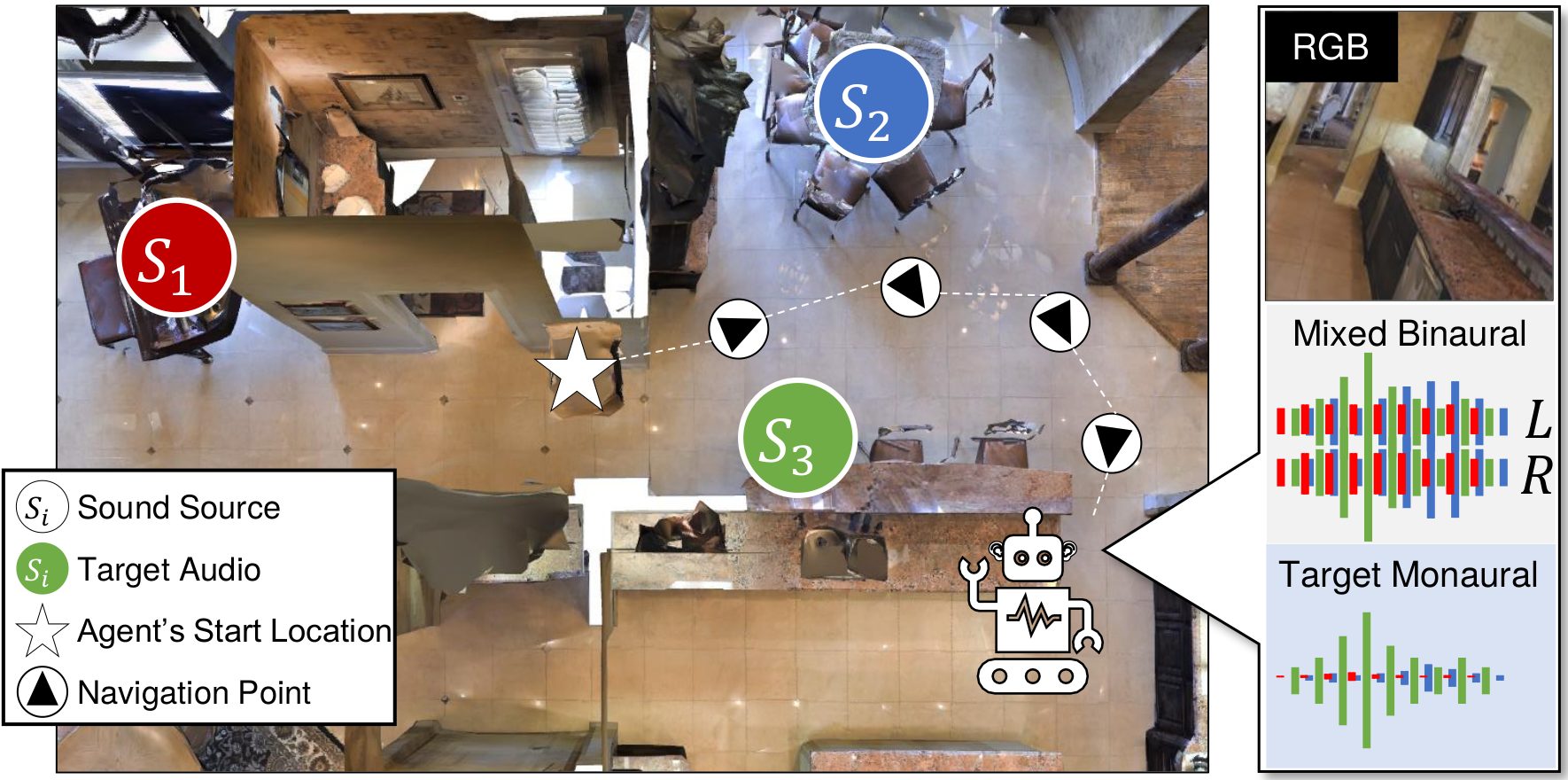

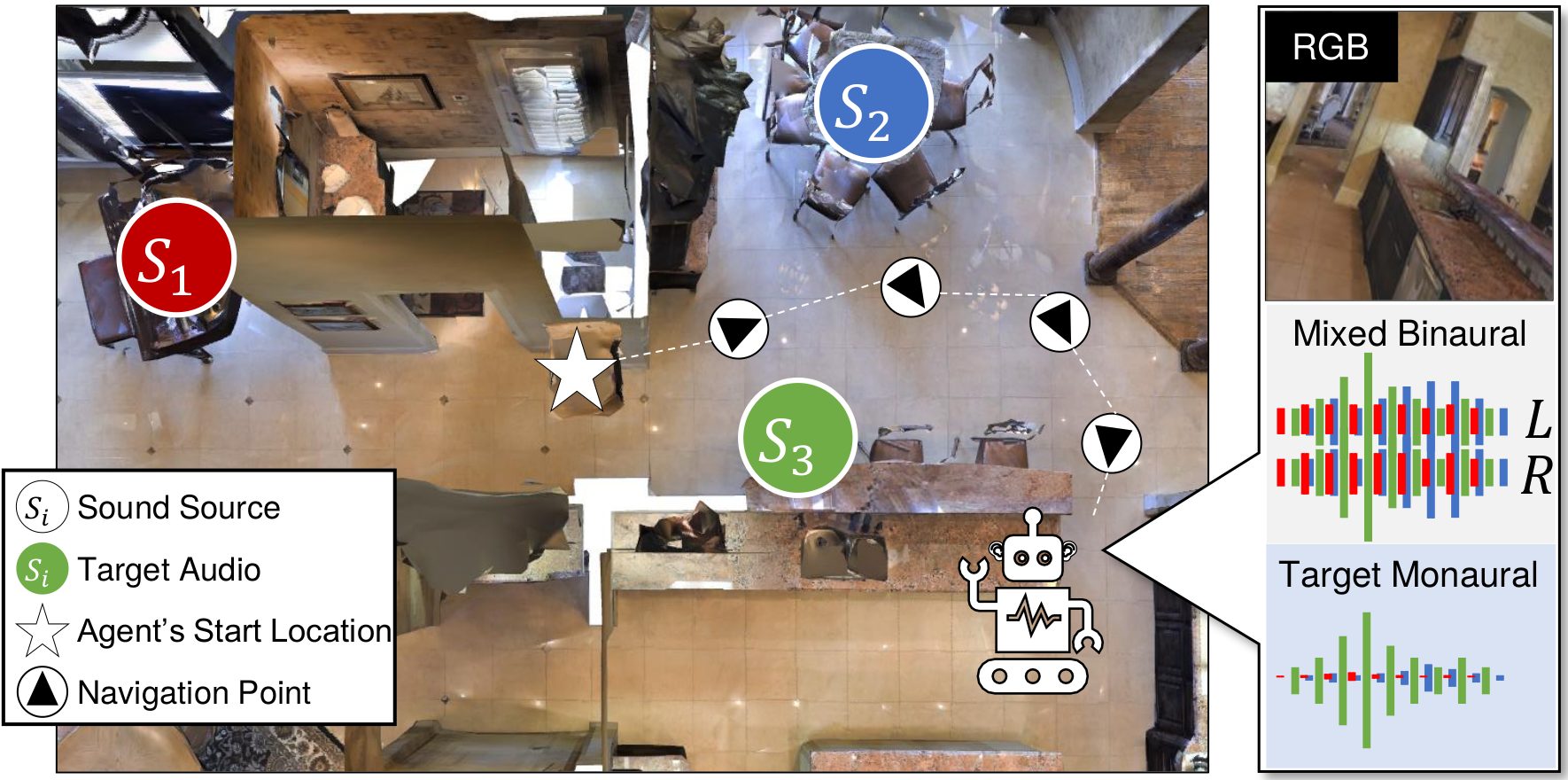

| We introduce the active audio-visual source separation problem, where an agent must move intelligently in order to better isolate the sounds coming from an object of interest in its environment. The agent hears multiple audio sources simultaneously (e.g., a person speaking down the hall in a noisy household) and it must use its eyes and ears to automatically separate out the sounds originating from a target object within a limited time budget. Towards this goal, we introduce a reinforcement learning approach that trains movement policies controlling the agent's camera and microphone placement over time, guided by the improvement in predicted audio separation quality. We demonstrate our approach in scenarios motivated by both augmented reality (system is already co-located with the target object) and mobile robotics (agent begins arbitrarily far from the target object). Using state-of-the-art realistic audio-visual simulations in 3D environments, we demonstrate our model's ability to find minimal movement sequences with maximal payoff for audio source separation. |

|

Simulation demos and navigation examples.

|

|

|

@InProceedings{Majumder_2021_ICCV,

author={Majumder, Sagnik and Al-Halah, Ziad and Grauman, Kristen}

title={Move2Hear: Active Audio-Visual Source Separation},

booktitle = {Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV)},

month={October},

pages={275-285},

year={2021},

}

|

| UT Austin is supported in part by ONR PECASE N00014-15-1-2291, DARPA L2M, and the UT Austin IFML NSF AI Institute. Thanks to Ruohan Gao for help with initial experimental setup and to Changan Chen, Ruohan Gao, and Santhosh Kr. Ramakrishnan for feedback on paper drafts. |

| Copyright © 2021 University of Texas at Austin |