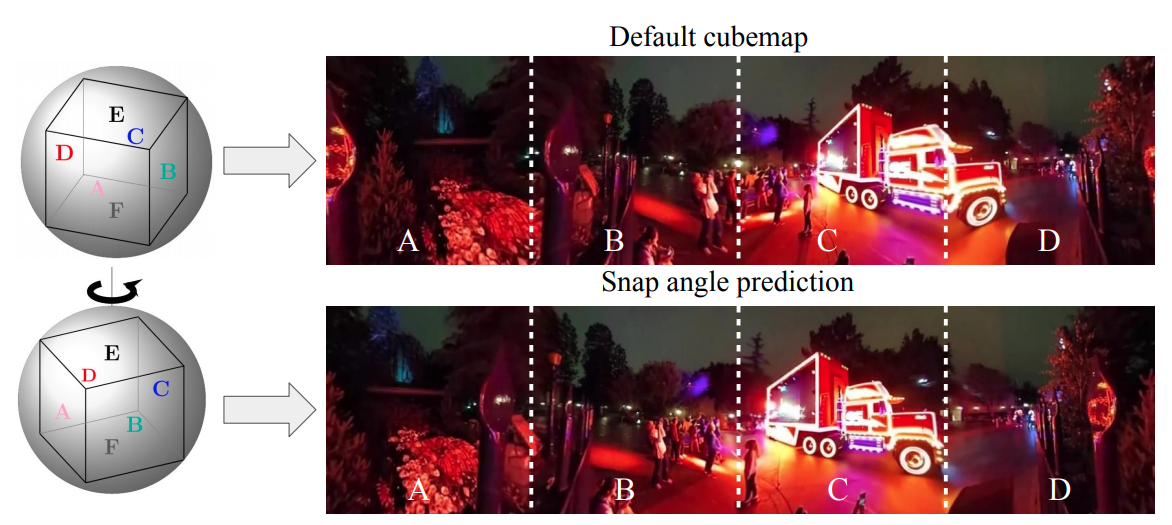

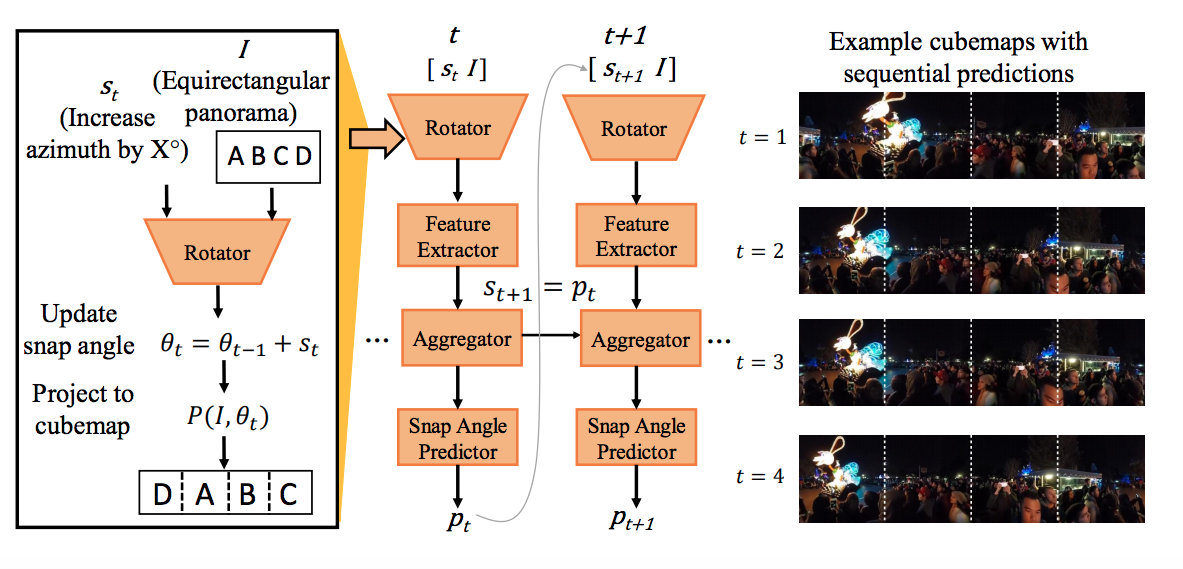

Viewing 360° content presents its own challenges: to display a spherical image on a flat screen, it must be projected onto a plane, and any such projection introduces distortion. All prior automatic content-based projection methods implicitly assume that the viewpoint of the input 360° image is fixed. We propose to eliminate this fixed-viewpoint assumption. Our key insight is that a well-chosen viewing angle can directly reduce distortion, even when it is followed by a conventional projection.
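The key insight can be illustrated with a minimal sketch. This is not the authors' method, and it rests on several assumptions. The input is taken to be an equirectangular image. The viewing angle is restricted to a yaw (azimuth) rotation, which for an equirectangular image is simply a circular shift of its columns. The cost is hypothetical: the total of a given per-pixel importance map that falls within a small margin of the vertical seams between a conventional cubemap's side faces, with those seams assumed to lie at 45°, 135°, 225° and 315° longitude. The functions `rotate_yaw`, `seam_cost` and `best_yaw`, and the importance map itself, are illustrative assumptions.

```python
import numpy as np


def rotate_yaw(equirect: np.ndarray, yaw_deg: float) -> np.ndarray:
    """Change the viewing angle of an equirectangular image by a yaw rotation.

    A pure azimuthal rotation is a circular shift of the columns; rows
    (latitude) are unaffected. The column at longitude `yaw_deg` moves to column 0.
    """
    width = equirect.shape[1]
    shift = int(round(yaw_deg / 360.0 * width))
    return np.roll(equirect, -shift, axis=1)


def seam_cost(importance: np.ndarray,
              seams_deg=(45.0, 135.0, 225.0, 315.0),
              margin_deg: float = 5.0) -> float:
    """Hypothetical cost: importance mass lying close to a cube-face seam.

    The seam longitudes are an assumption about the conventional projection
    applied afterwards; they are not taken from the paper.
    """
    width = importance.shape[1]
    # Longitude of each column centre, in degrees measured from the left edge.
    lon = (np.arange(width) + 0.5) * 360.0 / width
    # Wrap-around angular distance from each column to its nearest seam.
    dist = np.min([np.abs((lon - s + 180.0) % 360.0 - 180.0) for s in seams_deg],
                  axis=0)
    near_seam = dist < margin_deg
    return float(importance[:, near_seam].sum())


def best_yaw(importance: np.ndarray, candidates_deg) -> float:
    """Return the candidate yaw whose rotated view has the lowest seam cost."""
    costs = [seam_cost(rotate_yaw(importance, y)) for y in candidates_deg]
    return candidates_deg[int(np.argmin(costs))]
```

For example, an importance map with a single salient region straddling the 45° seam has a nonzero cost at yaw 0°. Given the candidates `list(range(0, 90, 5))`, `best_yaw` returns 15°, which moves the region entirely into a face interior while leaving the subsequent projection unchanged. The search covers only yaw, and only 0° to 90°, because the assumed seams repeat every 90°. A fuller treatment would also consider pitch and roll.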