| Kumar Ashutosh<sup>1,2</sup>, Zihui Xue<sup>1,2</sup>, Tushar Nagarajan<sup>2</sup>, Kristen Grauman<sup>1,2</sup> |

| <sup>1</sup>UT Austin, <sup>2</sup>FAIR, Meta |

| CVPR 2024 Highlight Paper (Top 2.8%) |

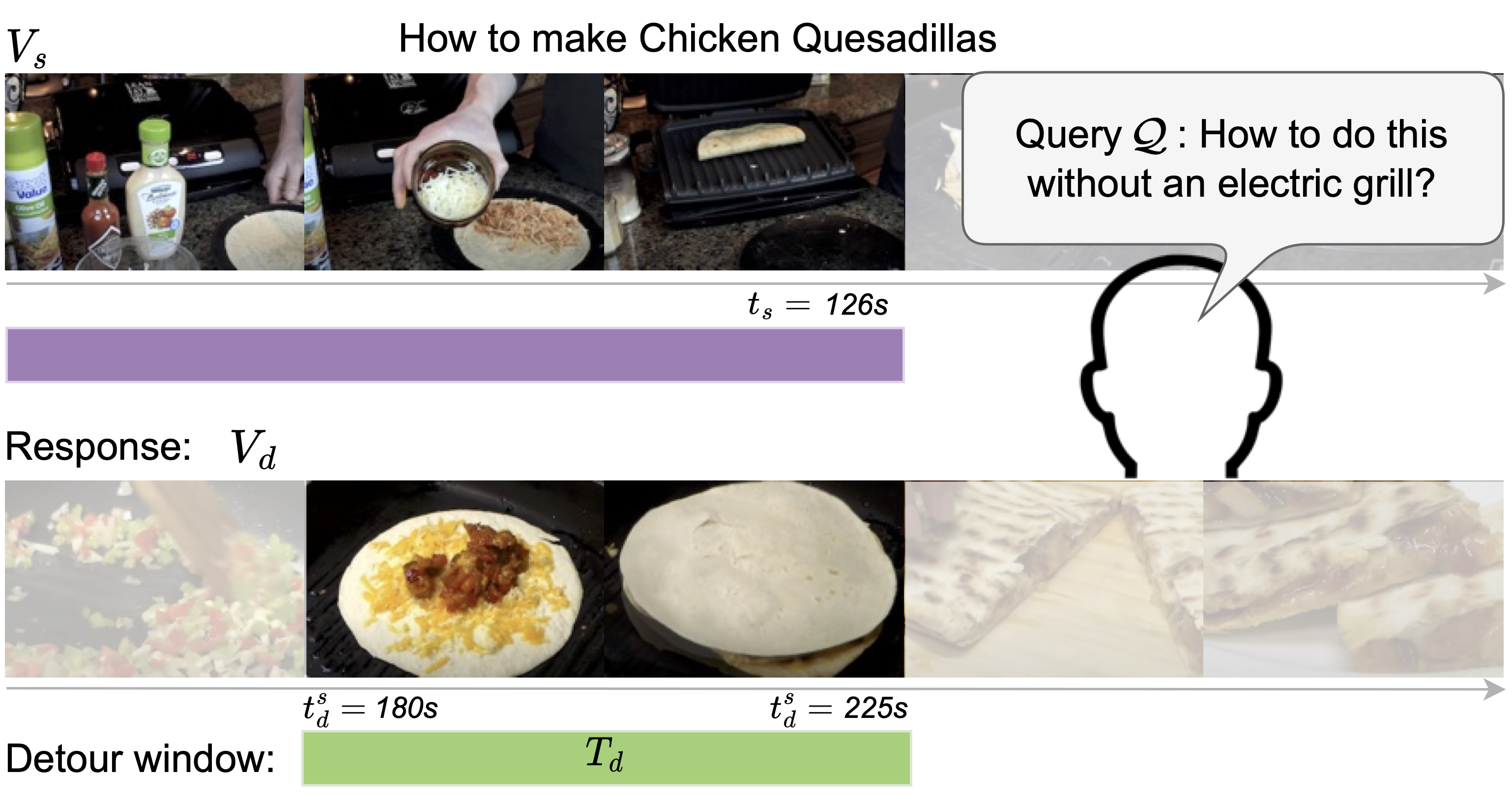

| We introduce the video detours problem for navigating instructional videos. Given a source video and a natural language query asking to alter the how-to video's current path of execution in a certain way, the goal is to find a related "detour video" that satisfies the requested alteration. To address this challenge, we propose VidDetours, a novel video-language approach that learns to retrieve the targeted temporal segments from a large repository of how-to videos using video-and-text conditioned queries. Furthermore, we devise a language-based pipeline that exploits how-to video narration text to create weakly supervised training data. We demonstrate our idea in the domain of how-to cooking videos, where a user can detour from their current recipe to find steps with alternate ingredients, tools, and techniques. Validating on a ground-truth annotated dataset of 16K samples, we show our model's significant improvements over the best available methods for video retrieval and question answering, with recall rates exceeding the state of the art by 35%. |
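To make the retrieval setting concrete, here is a minimal sketch of ranking candidate segments against a combined video-and-text query and scoring the result with recall@k. It is not the VidDetours model: the embeddings are toy vectors, and the weighted-average fusion in `fuse_query`, the `alpha` parameter, and all function names are assumptions made purely for illustration.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def fuse_query(video_emb, text_emb, alpha=0.5):
    """Hypothetical fusion: weighted average of the source-video and text-query embeddings."""
    return [alpha * v + (1 - alpha) * t for v, t in zip(video_emb, text_emb)]


def retrieve(query_emb, candidates, k=1):
    """Return the names of the k candidate segments most similar to the query."""
    ranked = sorted(
        candidates,
        key=lambda name: cosine(query_emb, candidates[name]),
        reverse=True,
    )
    return ranked[:k]


def recall_at_k(results, ground_truth, k):
    """Fraction of queries whose ground-truth segment appears in the top-k results."""
    hits = sum(1 for q, gt in ground_truth.items() if gt in results[q][:k])
    return hits / len(ground_truth)


# Toy usage: two candidate segments with made-up 2-D embeddings.
candidates = {"segment_a": [1.0, 0.0], "segment_b": [0.0, 1.0]}
query = fuse_query(video_emb=[1.0, 0.2], text_emb=[0.9, 0.0])
top = retrieve(query, candidates, k=1)
score = recall_at_k({"q1": top}, {"q1": "segment_a"}, k=1)
```

In a real system the embeddings would come from learned video and language encoders, and the fusion would be learned rather than a fixed average.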

@article{ashutosh2024detours,
  title={Detours for Navigating Instructional Videos},
  author={Ashutosh, Kumar and Xue, Zihui and Nagarajan, Tushar and Grauman, Kristen},
  journal={arXiv preprint arXiv:2401.01823},
  year={2024}
}

| UT Austin is supported in part by the IFML NSF AI Institute. KG is paid as a research scientist by Meta. We thank Suyog Jain, Austin Miller, Honey Manglani and Robert Kuo for help with the data collection. |

| Copyright © 2024 University of Texas at Austin |