| Sagnik Majumder<sup>1,2</sup>, Ziad Al-Halah<sup>3</sup>, Kristen Grauman<sup>1,2</sup> |

|

<sup>1</sup>UT Austin, <sup>2</sup>FAIR at Meta, <sup>3</sup>U. Utah. Accepted to CVPR 2024 |

|

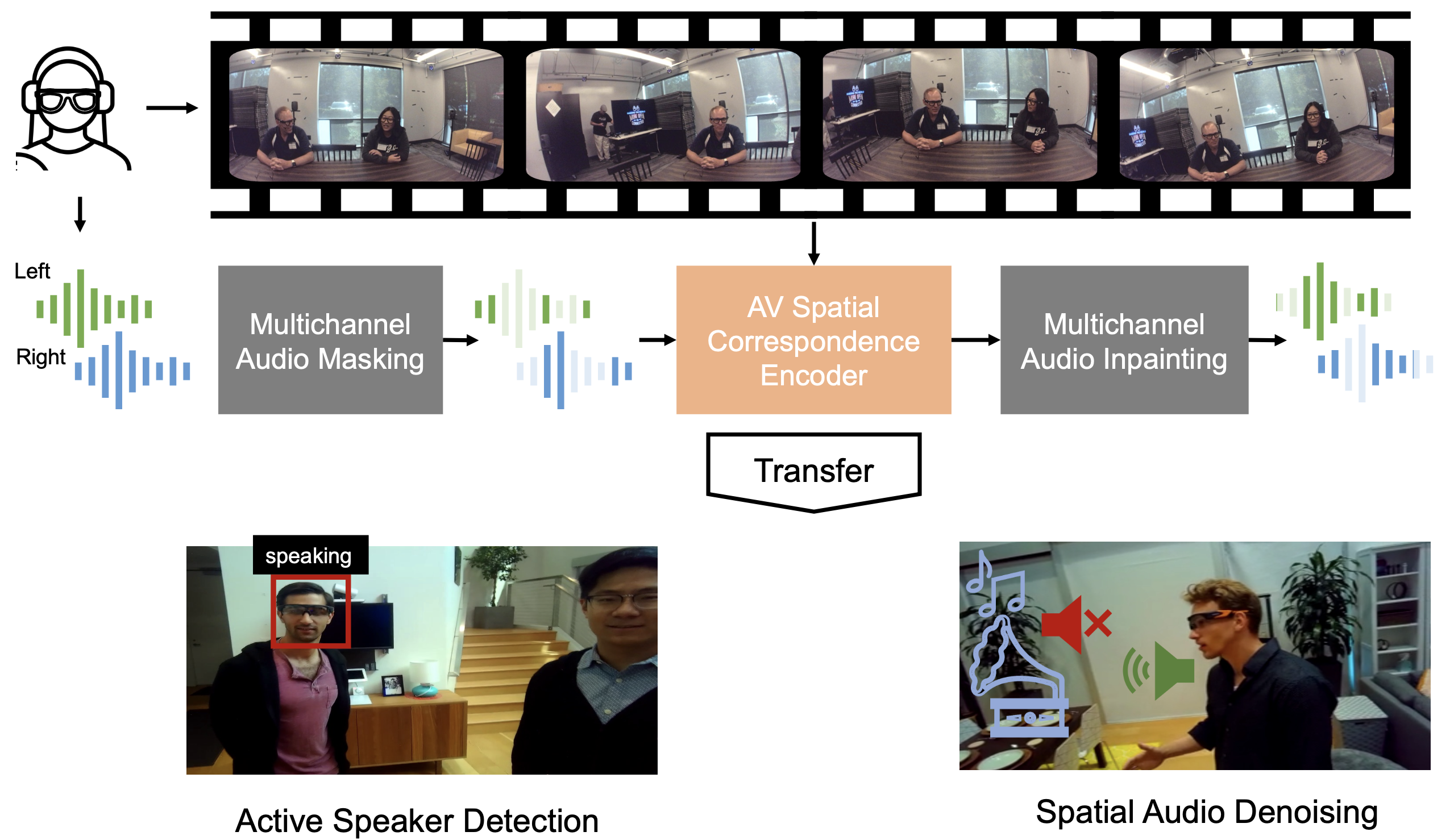

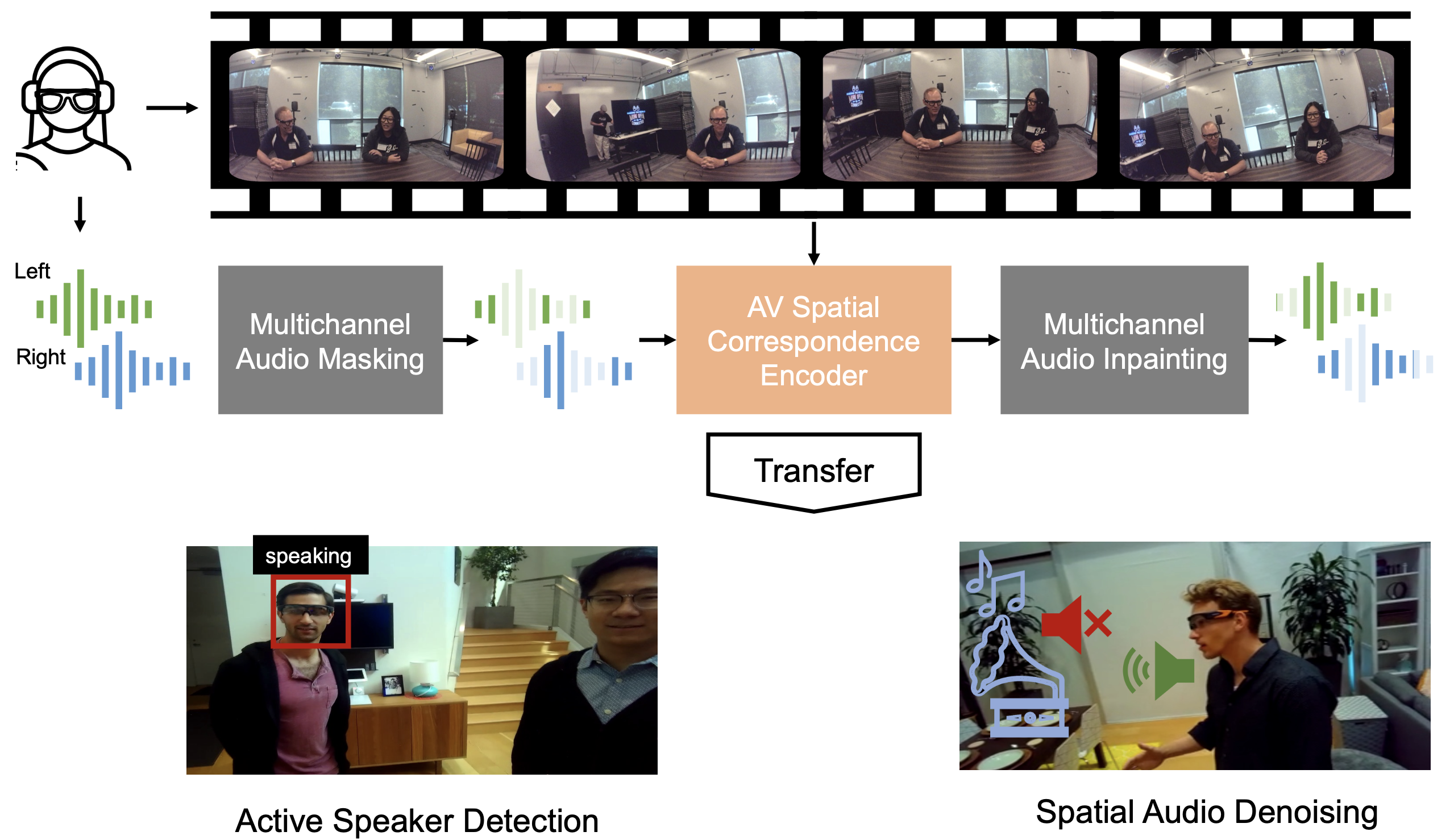

| We propose a self-supervised method for learning representations based on spatial audio-visual correspondences in egocentric videos. Our method uses a masked auto-encoding framework to synthesize masked binaural (multi-channel) audio by jointly using audio and vision, thereby learning useful spatial relationships between the two modalities. We use our pretrained features to tackle two downstream video tasks that require spatial understanding in social scenarios: active speaker detection and spatial audio denoising. Through extensive experiments, we show that our features are generic enough to improve over multiple state-of-the-art baselines on both tasks across two challenging egocentric video datasets that offer binaural audio, EgoCom and EasyCom. |
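To make the masked auto-encoding idea more concrete, the following is a minimal NumPy sketch of the pretext setup only: hide random patches of a two-channel (binaural) spectrogram and score a reconstruction on the hidden regions alone. It is an illustration under stated assumptions, not the authors' implementation. The function names `mask_binaural_patches` and `masked_reconstruction_loss`, the patch size, the mask ratio, the zero fill value, and the mean-squared-error loss are all hypothetical choices; the page does not specify the masking strategy or the loss, and the audio-visual model that would produce the reconstruction is omitted entirely.

```python
import numpy as np


def mask_binaural_patches(spec, patch=4, mask_ratio=0.5, seed=0):
    """Hide a random subset of non-overlapping patches in a (2, F, T) spectrogram.

    Patch size, mask ratio, and zero-filling are illustrative assumptions.
    Returns the masked spectrogram and a boolean mask (True = hidden).
    """
    channels, freq, time = spec.shape
    if freq % patch or time % patch:
        raise ValueError("F and T must be divisible by the patch size")

    rng = np.random.default_rng(seed)
    grid = (channels, freq // patch, time // patch)
    n_patches = int(np.prod(grid))
    n_masked = int(round(mask_ratio * n_patches))

    # Pick which patches to hide, independently per channel.
    flat = np.zeros(n_patches, dtype=bool)
    flat[rng.choice(n_patches, size=n_masked, replace=False)] = True

    # Expand the patch-level mask to element level.
    mask = np.repeat(np.repeat(flat.reshape(grid), patch, axis=1), patch, axis=2)
    masked = np.where(mask, 0.0, spec)
    return masked, mask


def masked_reconstruction_loss(pred, target, mask):
    """Mean squared error computed over the hidden elements only."""
    return float(np.mean((pred[mask] - target[mask]) ** 2))


if __name__ == "__main__":
    # Toy binaural spectrogram: 2 channels, 8 frequency bins, 16 time frames.
    spec = np.random.default_rng(1).normal(size=(2, 8, 16))
    masked, mask = mask_binaural_patches(spec)
    # A real model would predict the hidden patches from the visible audio
    # and the video frames; here the masked input stands in as a trivial guess.
    print(masked_reconstruction_loss(masked, spec, mask))
```

Because the mask is drawn per channel, a patch can be hidden in one channel while the same time-frequency region stays visible in the other. That is one way a model could be pushed to rely on inter-channel and visual cues, though whether the original method masks this way is not stated here.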

|

Task and model description, dataset samples, prediction examples and failure cases.

|

|

|

@inproceedings{majumder2024learning,

title={Learning spatial features from audio-visual correspondence in egocentric videos},

author={Majumder, Sagnik and Al-Halah, Ziad and Grauman, Kristen},

booktitle={Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition},

pages={27058--27068},

year={2024}

}

|

| Copyright © 2023 University of Texas at Austin |